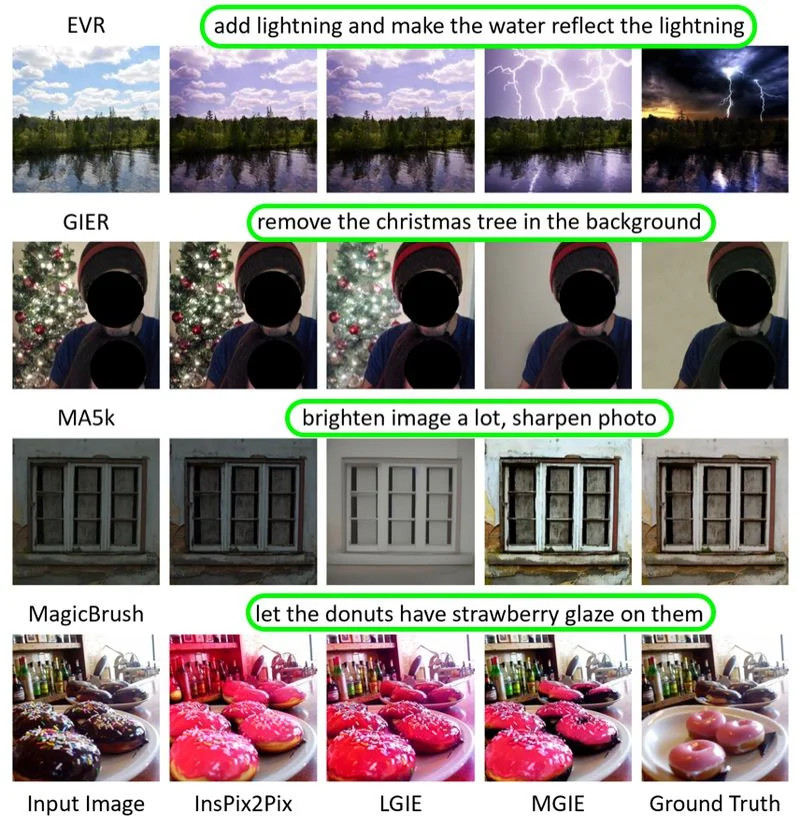

Apple has unveiled MGIE, a new AI-based image editing tool that lets users edit images using text commands. MGIE stands for MLLM-Guided Image Editing, where MLLM is short for multimodal large language model. It supports Photoshop-style edits, global optimization, and local edits.

This release comes shortly after Apple’s announcement during a quarterly earnings call about its increased focus on generative AI. The tool was developed in collaboration with researchers from the University of California, Santa Barbara, and was presented at the International Conference on Learning Representations (ICLR) 2024, where it showcased advances in AI-powered image editing.

MGIE is now available as an open-source project on GitHub, which provides the code, data, and pre-trained models. A demo notebook shows how to use MGIE for different editing tasks. MGIE can also be tried online through a web demo hosted on Hugging Face Spaces, a platform for sharing and collaborating on machine learning (ML) projects.

MGIE lets users type editing requests that range from basic adjustments to advanced changes, such as reshaping objects or enhancing brightness in specific areas of an image. The model relies on multimodal language processing to interpret user inputs and produce the corresponding edits.

MGIE represents a significant step forward for multimodal AI. Experts acknowledge, however, that such systems still have room for improvement. The rapid pace of progress suggests that assistive AI tools like MGIE could soon become essential creative companions.

Although MGIE is not yet integrated into Apple’s devices, it marks the start of Apple’s journey in generative AI and hints at potential future advancements.
